Sign Language Phonology

After one of the Bampton lectures at Columbia in 1986, a young member of the audience approached him (Zellig Harris) and asked what he would take up if he had another lifetime before him. He mentioned poetry, especially the longer works of the 19th century poets like Browning. He mentioned music. And he mentioned sign language.

-Bruce Nevin, "A Tribute to Zellig Harris"

Introduction

Linguists have been drawn to the study of signed languages for about 35 years because of the challenges they pose to our theoretical tools as we attempt to deal with a natural language that uses vision rather than audition. It is important to consider what the state of our knowledge about American Sign Language (ASL) is, since signed languages also offer unique opportunities for testing ideas about the nature of language itself, ideas generally formulated exclusively from observations about spoken language. Our task as ASL phonologists is to ascertain which are the minimal units of the system, which aspects of this signal are contrastive, and how these units are constrained by the sensory systems that produce and perceive them. Of all the items of the list of differences and similarities between signed and spoken languages the areas that present the most striking divergences occur in morphophonemics and phonology. The interface between morphology and phonology is indeed different, given the freedoms and constraints available to the system. (Diane Brentari)

Definition of Sign Language

A system of human communication whose character is like that of a spoken language, except that it is through gestures instead of sounds. Thus the systems used especially by the deaf, such as British Sign Language (BSL), or American Sign Language (ASL or Ameslan).

To be distinguished, as productive systems with their own rules and structures, from gestural transcriptions of spoken language, e.g. in semaphore, or from limited systems of hand signals, as used e.g. in directing traffic.

The articulatory means of sign languages are the hands and arms, the body, the head, and the muscles of the face, in particular the muscles around the eyes, the brows and the mouth, and eye movements. The hands produce the lexemes, often jointly with the mouth.

Sign Structure: Phonetics and Phonology in Sign Language

Sometimes termed ‘chirology’ (from the Greek cheir ‘hand’), the study of the constituents of signs has been one of the major concerns of linguistic research since the 1960s. The term ‘phonology’ is used in the context of sign language research to emphasize the parallels in structure between spoken and sign languages at this level. Before Stokoe (1960), signs had been regarded as unanalyzable, unitary gestures, and therefore as containing no level analogous to the phonological. Stokoe’s contribution was to recognize that American Sign Language (ASL) signs could be viewed as compositional, with subelements contrasting with each other, and thus unlike gestures. More recent research has sought to apply approaches to phonological theory in spoken languages, such as autosegmental phonology, to sign structure.

1. Arbitrariness in Phonology

A major issue for sign language phonologists is whether there is meaning at the sublexical level. Signs with shared sublexical features (e.g., handshape or location) often share some features of meaning. Many signs located at the forehead relate to cognitive activity (THINK, DREAM, LEARN, etc.). In British Sign Language (BSL), the handshape of little finger extended from the fist is found in such signs as BAD, ILL, END, etc., while signs made with the handshape of thumb extended from the fist include GOOD, RIGHT, AGREE, and so on.

2. Iconicity

It is perhaps not surprising that visual languages exhibit more iconicity than auditory languages: objects in the external world tend to have more visual than auditory associations. It is important to emphasize that while sign languages may not show an arbitrary link between symbol and referent or form and meaning, this link is as conventionalized as in spoken languages. It is also important to note that just as speakers of English may not be aware of the sound symbolism in such words as ‘wring,’ ‘writhe,’ ‘wrist,’ etc., so too signers may not be aware of the iconic origins of signs. There remains a great deal of research to be done on the role and status of iconicity in sign language.

3. Constraints on Sign Form

Constraints on sign forms arise from two sources: physical limitations and language-specific restrictions. Battison (1978) proposes two constraints on sign form in ASL which also appear to hold for other sign languages. The ‘symmetry condition’ states that if both hands move in a two-handed sign, they must both have the same handshape and the same movement. The ‘dominance condition’ states that when the location of a sign is a passive hand, the handshape of the passive hand must either be identical to that of the active hand, or be one of a set of unmarked handshapes. It is of interest to note that while it is common to see two hands with different handshapes, in different locations, and with different movements, such structures always reflect some syntactic, rather than lexical, form.
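The two conditions can be read as simple well-formedness checks on a two-handed sign. The sketch below is only an illustration under stated assumptions: the `Hand` record, the function names, and the contents of `UNMARKED_HANDSHAPES` are hypothetical (the text does not enumerate the unmarked handshapes), and a movement of `None` is taken to mean that the hand is passive.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder inventory: the text does not list the unmarked handshapes,
# so these labels are an assumption made for illustration only.
UNMARKED_HANDSHAPES = {"B", "A", "S", "C", "O", "1", "5"}


@dataclass(frozen=True)
class Hand:
    handshape: str
    movement: Optional[str]  # None means the hand does not move (passive)


def satisfies_symmetry(active: Hand, passive: Hand) -> bool:
    """Symmetry condition: if both hands move, handshape and movement match."""
    both_move = active.movement is not None and passive.movement is not None
    if not both_move:
        return True  # the condition only applies when both hands move
    return (active.handshape == passive.handshape
            and active.movement == passive.movement)


def satisfies_dominance(active: Hand, passive: Hand) -> bool:
    """Dominance condition: a passive hand used as a location must copy the
    active handshape or use one of the unmarked handshapes."""
    if passive.movement is not None:
        return True  # the condition only applies to a passive hand
    return (passive.handshape == active.handshape
            or passive.handshape in UNMARKED_HANDSHAPES)


# Both hands move with the same handshape and movement: well formed.
print(satisfies_symmetry(Hand("5", "circle"), Hand("5", "circle")))
# Passive hand with a handshape outside the assumed unmarked set: ill formed.
print(satisfies_dominance(Hand("1", "tap"), Hand("R", None)))
```

A sign that fails either check would, on Battison's account, be expected only in syntactically complex constructions and not as a lexical form.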

Phonological processes operate on the citation forms of signs; amongst those studied are change of location and deletion of a hand. Signs tend to move towards the center of signing space, and contact with a location tends to be lost. It is also common for one hand to be deleted in two-handed signs. Liddell and Johnson (1985) discuss at length a whole series of phonological processes in ASL, including movement epenthesis, metathesis, gemination, perseveration, and anticipation.

4. Borrowing from Spoken Languages

All signers live among hearing populations using spoken languages, and have some degree of access to the language of the hearing population. This contact is manifested in a number of areas, e.g., fingerspelling, and loan-translations.

1. Simultaneous Units

The first attempt by Stokoe (1960) and Stokoe, Casterline, and Croneberg (1965) to analyze lexical items into phonemes rejected the assumption imported from spoken-language phonology that sequential organization must be the most important way that signs are constructed. Stokoe proposed that we should look instead at the principal components of signs as they present lexical contrast, and he concluded that these units were simultaneously, rather than sequentially, organized.

2. Sequential Units

The notion of simultaneous organization of underlying structure in ASL was argued against, and indeed displaced, during the 1980s. Newkirk (1981), Liddell (1984), Liddell and Johnson (1986, 1989) and Johnson and Liddell (1984) presented arguments for sequential underlying structure in ASL.

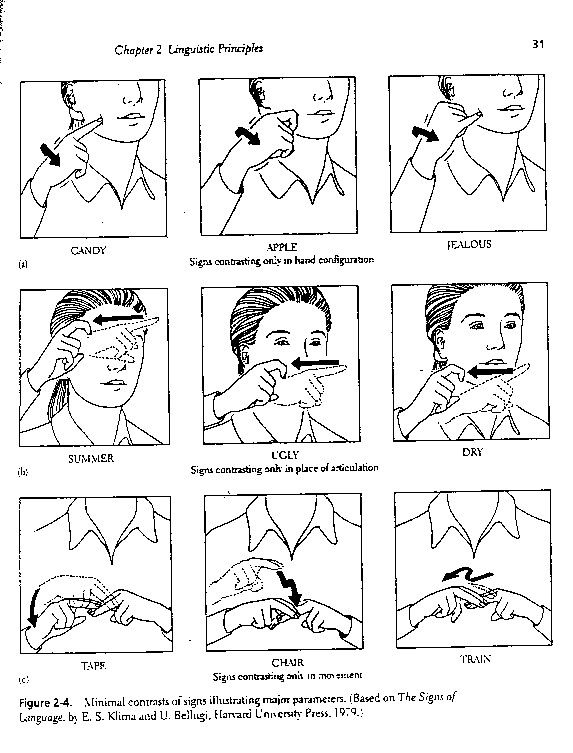

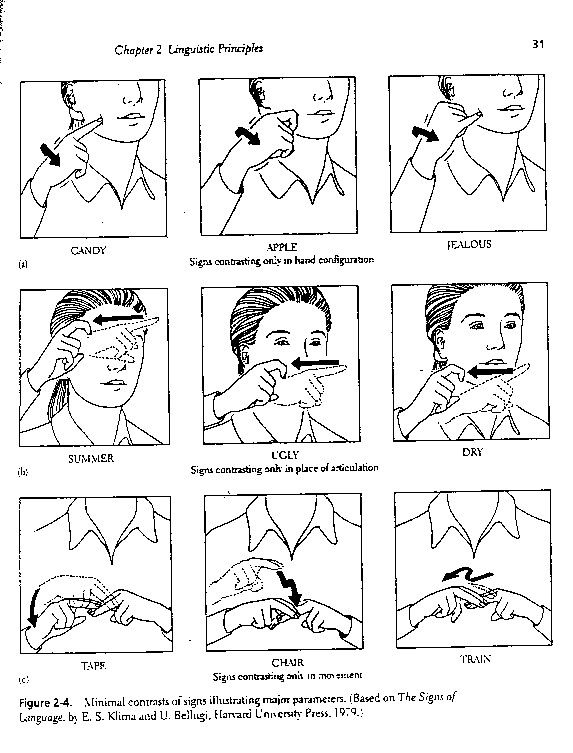

The three major parameters of signs are hand configuration, place of articulation, and movement (Stokoe, Casterline, & Croneberg, 1976). Stokoe and colleagues identified 19 different values of hand configuration, or handshapes. These include an open palm, a closed fist, and a partially closed fist with the index finger pointing. Place of articulation, which has 12 values, deals with whether the sign is made at the upper brow, the cheek, the upper arm, and so on. Movement refers to whether the hands are moving upward, downward, sideways, toward or away from the signer, in rotary fashion, and so on, and includes 24 values. Although these values are meaningless in themselves, they are combined in various ways to form ASL signs. Thus, ASL has duality of patterning.

Figure 2-4 shows a series of minimal contrasts involving these three parameters. The top row shows three signs that differ only in hand configuration (that is, the signs are identical in place of articulation and movement). The second and third rows show minimal contrasts for place and movement, respectively. Notice how a change in a single parameter value can change the entire meaning of a sign.
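The idea of a minimal contrast can be made concrete by treating a sign as a triple of parameter values. The sketch below is an illustration only: the `Sign` type, the function name, and the parameter labels (such as `"hc-1"` or `"cheek"`) are hypothetical placeholders and are not transcriptions of the signs in the figure. The count of 19 × 12 × 24 is merely an upper bound on logically possible combinations; the text does not claim that all of them are attested.

```python
from typing import NamedTuple, Optional


class Sign(NamedTuple):
    hand_configuration: str
    place: str
    movement: str


def contrasting_parameter(a: Sign, b: Sign) -> Optional[str]:
    """Return the one parameter on which two signs differ, or None if they
    differ on no parameter or on more than one (not a minimal contrast)."""
    differing = [name for name in Sign._fields
                 if getattr(a, name) != getattr(b, name)]
    return differing[0] if len(differing) == 1 else None


# Upper bound on parameter combinations, using the counts given in the text.
POSSIBLE_COMBINATIONS = 19 * 12 * 24

# Placeholder values: two signs sharing place and movement.
sign_a = Sign("hc-1", "cheek", "twist")
sign_b = Sign("hc-2", "cheek", "twist")
sign_c = Sign("hc-2", "brow", "circle")

print(POSSIBLE_COMBINATIONS)                  # 5472
print(contrasting_parameter(sign_a, sign_b))  # hand_configuration
print(contrasting_parameter(sign_a, sign_c))  # None
```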

It is also possible to analyze parameter values into distinctive features. Two such features for handshapes are index, which refers to whether the index finger is extended, and compact, which refers to whether the hand is closed into a fist. Among the signs in the top line of Figure 2-4, candy is +index and -compact, apple is +index and +compact, and jealous is -index and -compact. To determine whether signers’ perceptions of ASL are related to features such as these, Lane, Boyes-Braem, and Bellugi (1976) presented deaf individuals with a series of signs under conditions of high visual noise (a video monitor with a lot of “snow”). The participants were asked to recognize the signs on the monitor. The researchers found that the large majority of recognition errors involved pairs of signs that differed in only one feature. That is, signs with similar patterns of distinctive features were psychologically similar to one another. (from Psychology of Language. 1999.)
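The notion of signs "differing in only one feature" can be expressed as a count of mismatched feature values. The following sketch uses the index and compact values stated above for candy, apple, and jealous. The dictionary layout and the function name `feature_distance` are assumptions made for illustration, and the measure is a simple mismatch count, not the analysis used by Lane and colleagues.

```python
from itertools import combinations

# Feature values as stated in the text for the top row of Figure 2-4.
FEATURES = {
    "candy": {"index": True, "compact": False},
    "apple": {"index": True, "compact": True},
    "jealous": {"index": False, "compact": False},
}


def feature_distance(sign_a: str, sign_b: str) -> int:
    """Count the features on which two handshapes disagree."""
    a, b = FEATURES[sign_a], FEATURES[sign_b]
    return sum(a[name] != b[name] for name in a)


for left, right in combinations(FEATURES, 2):
    print(left, right, feature_distance(left, right))
# candy apple 1
# candy jealous 1
# apple jealous 2
```

On this toy measure, the reported finding would lead one to expect candy to be confused with apple or with jealous more readily than apple with jealous.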

Errors that occur in signing strongly resemble those found in speech.

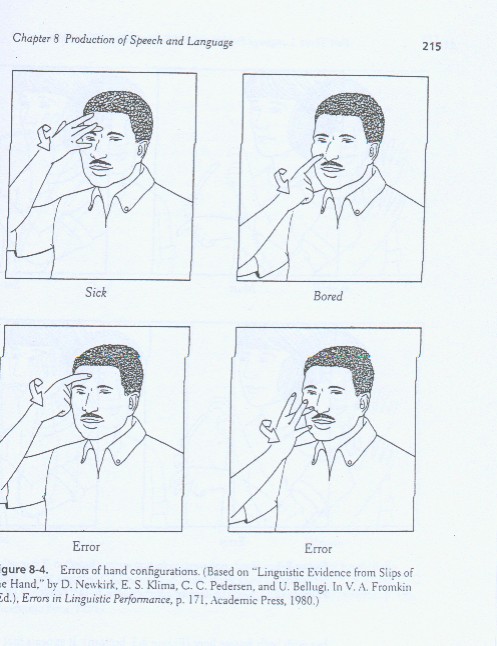

Newkirk, Klima, Pedersen, and Bellugi (1980) have found some fascinating evidence that slips of the hand, similar to slips of the tongue, take place with deaf signers. They used a corpus of 131 errors, 77 of which came from videotaped signings and 54 of which were reported observations from informants or researchers. 98 [...] spontaneous self-correction or by subsequent viewing of the videotapes.

Independence of Parameters: Newkirk and colleagues analyzed the errors in terms of the parameters of American Sign Language (hand configuration, place of articulation, and movement) to assess whether sign parameters also appear to be independent units of production.

The researchers found errors analogous to exchanges, anticipations, and perseverations. In one exchange, for example, a signer intended to sign sick, bored (similar to the English I’m sick and tired of it). This intended production can be described in the following way:

Sick

Hand configuration: hand toward signer

Place of articulation: at forehead

Movement: with twist of wrist

Bored

Hand configuration: straight index finger with hand toward signer

Place of articulation: at nose

Movement: with twist of wrist

What the signer actually produced was the sign for sick with the hand configuration for bored and vice versa. The other two parameters were not influenced. Overall, Newkirk and colleagues found 65 instances of exchanges involving hand configuration, of which 49 were “pure” cases (that is, ones in which no other parameter was in error). In addition, 9 of 24 errors related to place and movement parameters were single-parameter errors. These cases provide evidence that ASL signs are not holistic gestures without internal structure; rather, they are subdivided into parameters that are somewhat independent of each other during sign language production.
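The exchange described above can be modeled as swapping one parameter between two signs while leaving the others untouched. The sketch below is illustrative only: the `Sign` type and the `exchange` function are hypothetical, the parameter strings are copied from the descriptions of sick and bored given in the text, and the proportions are plain arithmetic on the reported counts.

```python
from typing import NamedTuple, Tuple


class Sign(NamedTuple):
    hand_configuration: str
    place: str
    movement: str


def exchange(first: Sign, second: Sign, parameter: str) -> Tuple[Sign, Sign]:
    """Swap a single parameter between two signs; the rest stay in place."""
    return (
        first._replace(**{parameter: getattr(second, parameter)}),
        second._replace(**{parameter: getattr(first, parameter)}),
    )


# Parameter descriptions as given in the text.
sick = Sign("hand toward signer", "at forehead", "with twist of wrist")
bored = Sign("straight index finger with hand toward signer",
             "at nose", "with twist of wrist")

slip_sick, slip_bored = exchange(sick, bored, "hand_configuration")
print(slip_sick)   # bored's hand configuration, still produced at the forehead
print(slip_bored)  # sick's hand configuration, still produced at the nose

# Share of single-parameter ("pure") errors, from the reported counts.
pure_hand_configuration = 49 / 65  # about 0.754
pure_place_and_movement = 9 / 24   # 0.375
print(round(pure_hand_configuration, 3), pure_place_and_movement)
```

That the slip can be described by changing one field and nothing else is exactly the kind of independence among parameters that the error data are taken to support.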

In general, slips of the hand strongly suggest that similar principles of organization underlie signed and spoken language, pointing to the possibility that both types of language take the form that they do because of basic cognitive limits on how (or how much) linguistic information may be structured or used. In contrast, some recent studies of the rate at which signs and speech are produced point to some equally interesting discrepancies between the two modes.

(from Psychology of Language. 1999.)

1. Brentari, Diane.

2001. Foreign Vocabulary in Sign Languages: A Cross-Linguistic Investigation of Word Formation. Publisher: Lawrence Erlbaum Associates.

1999. A Prosodic Model of Sign Language Phonology. Publisher: MIT Press.

2. Stokoe, William C.

1980. Sign & Culture, A Reader for Students of American Sign Language. Publisher: Linstok Press.

1976. Dictionary of American Sign Language on Linguistic Principles. Publisher: Linstok Press.

1972. Semiotics and Human Sign Languages. Berlin: Walter de Gruyter, Inc.

3. Sandler, Wendy.

1989. Phonological Representation of the Sign: Linearity and Nonlinearity in American Sign Language. Berlin: Walter de Gruyter, Inc.

Links

1. About ‘Father of ASL’-Dr. William C. Stokoe, Jr.

http://dww.deafworldweb.org/pub/s/stokoe.html

2. Prosody in Sign Language-an online article by Wendy Sandler

http://www.sign-lang.uni-hamburg.de/intersign/Workshop2/Sandler.html

3. From Phonetics to Discourse: The Nondominant Hand and the Grammar of Sign Language-an online article by Wendy Sandler

http://www.ling.yale.edu:16080/labphon8/Talk_Abstracts/Sandler.html

4. Kaohsiung Sign Language Association: Sign Language Teaching Area

http://www.dra.org.tw/teaching-v.htm

5. Sign Language Instruction for Deaf People of Various Countries

http://new32.3322.net/sign/sign/sign.html

1. Do you think that sign languages for the deaf have phonologies?

ANS.: Yes. The phonological units are expressed by gestures rather than by vocal sounds. There are many sign languages in the world, and there is no genetic relationship between the dominant sign language and the dominant spoken language in any community. Signed and spoken languages are entirely comparable functionally and in terms of processing speed. A person who is deaf at birth and does not learn a sign language will be linguistically and cognitively deprived in the same way as a hearing person who is artificially prevented from learning a spoken language. It is only recently that research into the morphosyntactic and phonological structure of sign languages has got off the ground.

References

Asher, R. E. Ed. 1994. The Encyclopedia of Language and Linguistics. Pergamon Press.

Allwood, Jens & Peter Gärdenfors. Eds. 1999. Cognitive Semantics: Meaning and Cognition. John Benjamins.

Carroll, David W. 1999. Psychology of Language. California: Brooks/Cole Publishing Company.

Goldsmith, John A. 1995. The Handbook of Phonological Theory. Oxford: Blackwell.

Gussenhoven, Carlos. & Haike Jacobs. 1998. Understanding Phonology. Oxford Uni. Press.

Malmkjær, Kirsten. Ed. 1991. The Linguistics Encyclopedia. London: Routledge.

Matthews, P.H. Concise Dictionary of Linguistics. Oxford Uni. Press.